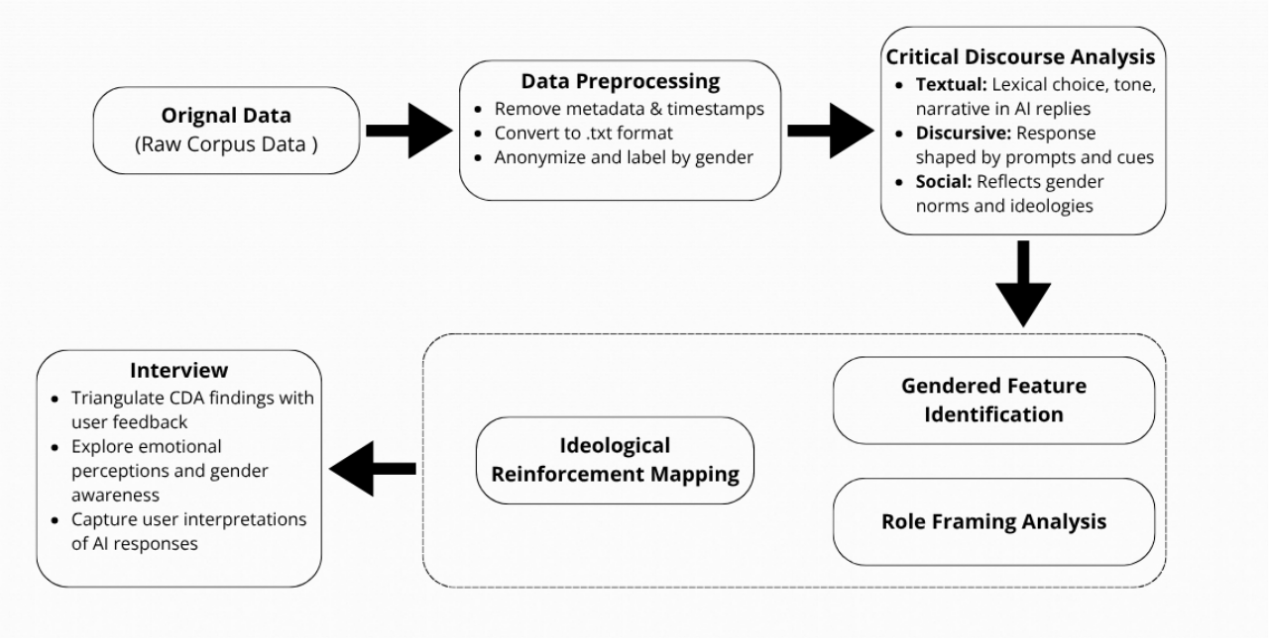

As generative AI is increasingly integrated into emotionally intimate contexts, concerns about its reproduction of gender bias are growing. While existing scholarship has extensively examined static biases in datasets and model design, few studies have explored how gender stereotypes evolve and are reinforced through dynamic human-computer interaction. This study examines how emotionally sustained conversations with an AI agent (e.g., ChatGPT) gradually stabilize and amplify symbolic gender roles through ritualized discourse patterns. Drawing on the Computers as Social Actors (CASA) paradigm and Interaction Ritual Chains (IRC) theory, it explores how users co-construct relational expectations with AI systems over time. Using a two-stage corpus design with eight participants, we compared lexical frames and emotional tones before and after a phase of intimate interaction. Results suggest that the AI's responses increasingly conformed to normative gender roles: women were positioned as emotional receivers, while men were cast as providers of resilience, even when both expressed similar emotional needs. These findings indicate that dynamic biases are not only deeply ingrained but also reinforced through the interaction itself, creating new ethical challenges for relational fairness in AI communication. By shifting the focus from static design issues to the ongoing dialogic reproduction of gender meaning, this study contributes to a deeper understanding of algorithmic bias in virtual companionship.